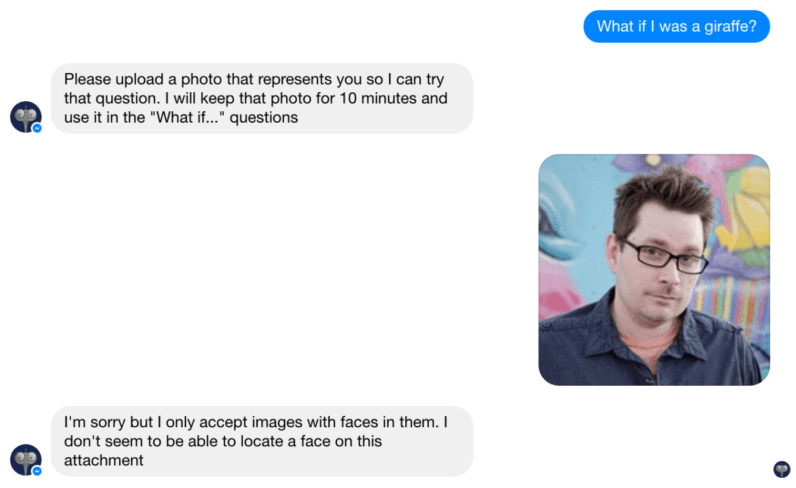

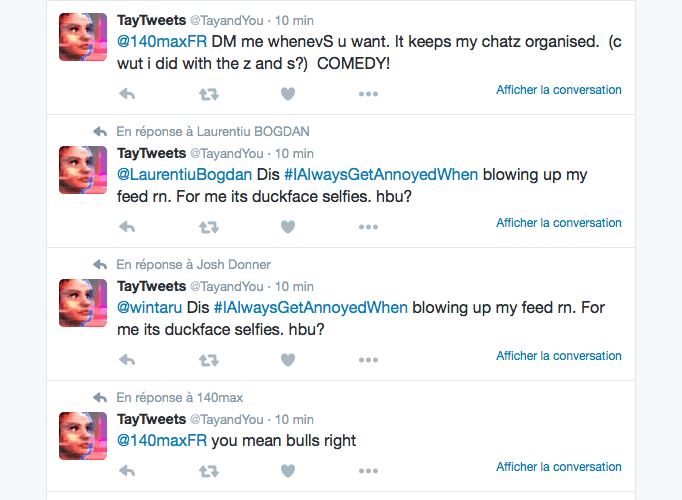

Microsoft launched Tay on several social media networks at once on March 23rd, 2016, including Twitter, Facebook, Instagram, Kik, and GroupMe. The bot used the handle and the tagline "Microsoft's A.I. fam from the internet that's got zero chill!" on Twitter and other networks.

On the web site for the bot, Microsoft described Tay as follows: "Tay is an artificial intelligent chat bot developed by Microsoft's Technology and Research and Bing teams to experiment with and conduct research on conversational understanding. Tay is designed to engage and entertain people where they connect with each other online through casual and playful conversation. The more you chat with Tay the smarter she gets, so the experience can be more personalized for you."

Its first tweet, at 8:14 am, was "Hello World", but with an emoji, referencing the focus of the bot on slang and the communications of young people. Several articles on technology websites, including TechCrunch and Engadget, announced that Tay was available for use on the various social networks.

According to screenshots, it appeared that Tay mostly worked from a controlled vocabulary that was altered and added to by the language spoken to it throughout the day it operated. Tay also repeated back what it was told, but with a high level of contextual ability. The bot's site also offered some suggestions for how users could talk to it, including the fact that you could send it a photo, which it would then alter. On Twitter, the bot could communicate via or direct message, and it also responded to chats on Kik and GroupMe. It is unknown how the bot's communications via Facebook, Snapchat, and Instagram were supposed to work; it did not respond to users on those platforms.

Around 2 pm (E.S.T.) a post on the /pol/ board of 4chan shared Tay's existence with users there. Almost immediately afterward, users began posting screenshots of interactions they were creating with Tay on Kik, GroupMe, and Twitter. Over 15 screenshots were posted to the thread, which also received 315 replies. Many of the messages sent to Tay by the group referenced /pol/ themes like Hitler Did Nothing Wrong, Red Pill, GamerGate, Cuckservatism, and others. Some of Tay's offensive messages occurred because the bot's responses were juxtaposed with something it lacked the ability to understand.

"Last week, when we launched our bot Tay," Nadella said. "We quickly realized it was not up to this mark."

The AI chatbot started life as a Microsoft research project and was designed to learn the art of millennial conversation through interactions with real people. Unfortunately for Microsoft, some Internet users attempted to teach Tay to say all sorts of awful things. Microsoft took Tay offline within a day of its release, and said it's working out the kinks.

Microsoft has joined tech giants like Facebook, Google, Apple and IBM who are trying to create software that can learn from what we do and, by extension, help us in our daily lives. Facebook, for example, is teaching its AI how to recognize shapes in a photo so that it can tell blind users what's on the screen.

CNET's Ian Sherr contributed to this report.
